Have you heard of the dead internet?

A reintroduction & thoughts on AI-generated everything

Lately, my feed’s been taken over by AI-generated everything. Videos, articles, posts, and even comments. It’s not just noise. It’s the quiet creep of what’s been called the Dead Internet.

The “Dead Internet Theory” suggests that a growing share of online content is no longer created by people. It’s generated by bots and large language models. I’m all for using AI to multiply creativity, but this isn’t that. This is content engineered for clicks, not meaning.

Researchers at Stanford analyzed over 300 million documents, from press releases to job listings, and found a massive spike in content generated or rewritten by AI since ChatGPT launched. Their detection method had an error rate of less than 3.3%. Odds are, you’re reading machine-written content every day without realizing it.

While the democratization of AI for consumers has been transformative, it has also led to a proliferation of low-quality, repetitive content, often referred to as “AI slop.”

What does this look like?

AI-generated YouTube videos with bot-written comments to boost engagement

Chrome extensions that auto-generate LinkedIn comments so you can “engage” without participating

The 1,271 unreliable AI-generated news, obituary, and information websites that are SEO-optimized and filled with ads (tracked by NewsGuard)

Surveys that no longer reflect human opinion because they are skipped, auto-filled by bots, or completed by AI tools. Researcher Lauren Leek notes that “AI agents are learning to serve other AI agents,” based on data loops that don’t begin with humans.

A buzzword-heavy article that somehow never lands a point

Fake personas running Amazon shops with stolen content and auto-dubbed voiceovers

Events that never existed, like the thousands of Dubliners waiting for a Halloween parade falsely promoted by an AI-generated website

AI-generated marketing that felt like a bait-and-switch once people saw the actual Willy Wonka experience

What does this mean for us?

The internet is certainly not dead yet. It’s still filled with human creativity, thought, and discourse. But we’re watching it shift as AI becomes able to create human-like text, video, and audio.

Authenticity → Erosion of Trust: If we can't tell what's human, we stop trusting anything. That’s not just a media issue. It’s a brand, business, and societal problem.

Democratization of Content Creation → Content Fatigue: We’ve democratized content, but we’re drowning in it. Volume is up. Resonance and meaning are down.

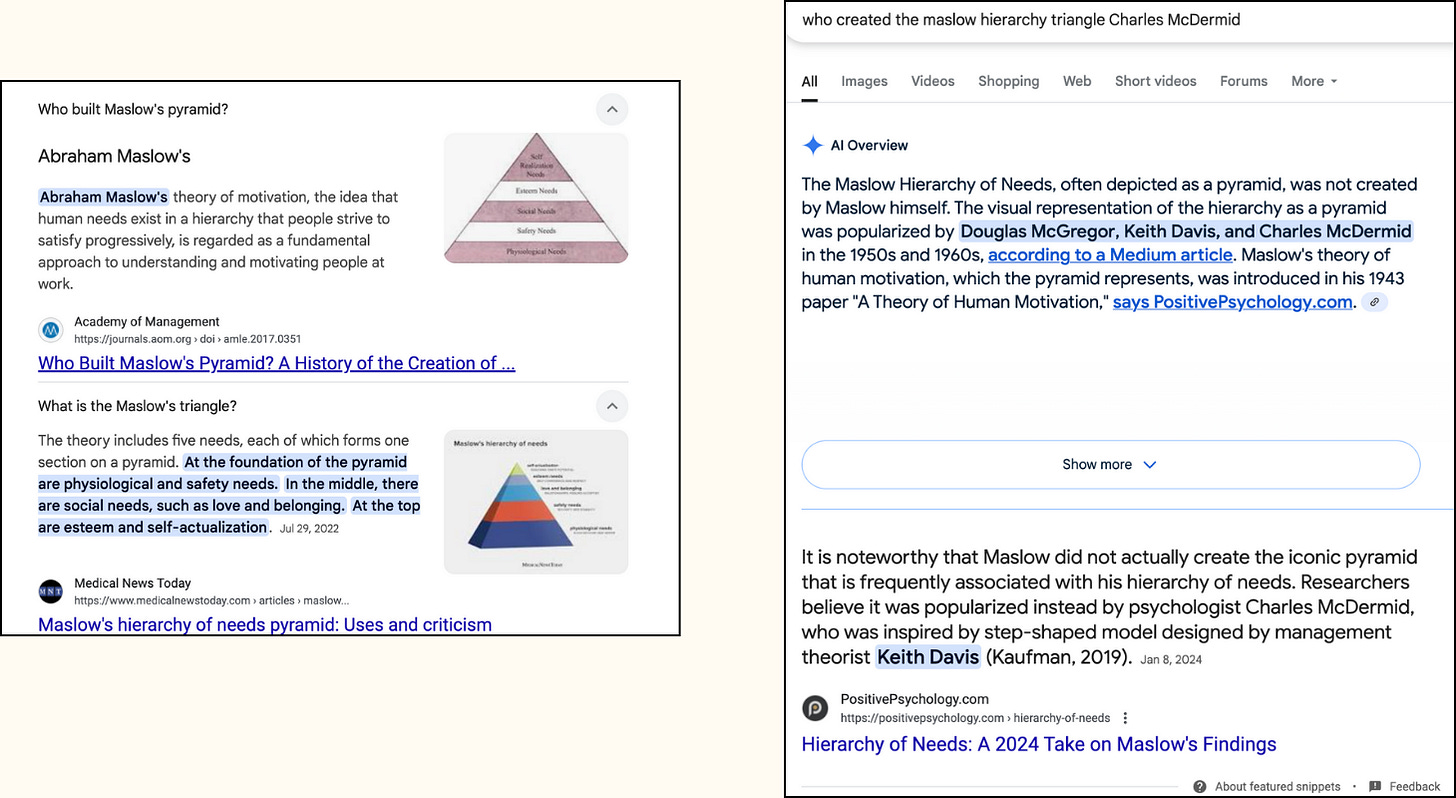

Automation → History Loss + Misinformation: Search is surfacing summaries, not sources. AI-generated content gets boosted, while original work gets buried. Looks get shared. Facts get lost.

If you ask Google who created the iconic triangle for Maslow’s hierarchy, it confidently says Maslow. But once you plug in the actual creator, Charles McDermid, it quietly corrects itself. Thanks to Ovetta Sampson for posting about this.

What Dead Internet Theory Gets Wrong

The internet isn’t dead. But it is different and it keeps changing. And we need a more precise diagnosis than doom.

1. Human content hasn’t vanished. It’s being buried.

People are still creating, connecting, and showing up online. But in a system optimized for speed and sameness, what’s real gets deprioritized. What’s visible isn’t always what’s valuable.

2. Not all automation is slop.

The problem isn’t that AI is in the loop; it’s how we’re using it. There’s a difference between generating noise and designing signal. We should be evaluating intent, not just output.

3. This isn’t a conspiracy. It’s an outcome.

No shadowy force killed the web. It’s death-by-design: an internet shaped by engagement metrics, SEO economics, and an attention market that rewards repetition over resonance. That’s an incentive structure misaligned with our original vision for the internet.

And so the bigger questions I’m asking myself and my team are:

When algorithms are optimizing content for each other (AI recommending AI), are we still consuming what we truly want, or just what one model predicts another will prefer?

In a feed flooded with algorithmic sameness, how do we create space for creative signals that come from lived experience, creative friction, cultural context, real connection, or even contradiction?

What bold new possibilities are we overlooking because we’ve undervalued human imagination, care, and context in the age of automation?

Things I’ve clicked lately:

The Atlantic’s piece on healthcare inequality and anti-science conspiracy

The Guardian on dissonance: “systems are crumbling, but daily life continues”

Next up on Unmissables: Tameka Vasquez

I sat down with Tameka Vasquez—a futurist, business strategist, and Founder of The Future Quo.

Her work turns foresight into a functional tool, not a buzzword. We talk about how futures thinking became her calling, why clarity can’t live in a silo, the trap of efficiency culture, and the power of holding space for multiple truths.

If you’ve been caught between what’s urgent and what’s essential, this episode is for you.

The signal is getting harder to hear, but it’s still there.

The rise of AI content doesn’t mean the end of the internet. But it does mean we have to be more intentional about what we create, what we consume, and what we amplify.

That’s what I’m doing with Unmissables: making space for conversations, questions, and tensions. Season 2 is live. And it’s sharper, deeper, and more necessary than ever.

In a world of over-optimization, I’m genuinely excited about creating again: under-optimized, hyper-curious, and ever-evolving.

Tune in: Spotify || Apple Podcasts || Substack || YouTube

If you’re new here, or just want to see what I’ve been up to, I reintroduced myself here. Come say hi.

More soon,

Ariba
